In March 2026, at its annual GTC conference, Nvidia made a bold statement: the future of AI is no longer just about training models, it’s about running them at scale. CEO Jensen Huang called this moment an “inference inflection point,” signaling a major shift toward real-time AI execution and autonomous agents.

This change reflects how AI is actually being used today. Businesses now need faster responses, lower costs, and smarter automation. From AI copilots to autonomous systems, demand for inferencing is exploding. Nvidia is responding by building the infrastructure for this next phase. The result? A strategic pivot that could redefine the entire AI economy, and who leads it.

From Training to Inference: Why Nvidia Is Changing Course?

Nvidia’s long‑standing strength has been in AI model training, building large language models and neural networks using massive GPU clusters. However, at GTC 2026 in San Jose this March, CEO Jensen Huang called out a new phase: “the inference inflection has arrived.” This means the focus is shifting from training models to running them efficiently at scale.

Inference refers to the phase where a trained AI model is used to generate output, answer questions, or perform tasks in real time. Huang noted that demand for inference is surging because businesses want AI that works now, not just models that are trained.

This shift reflects broader industry trends. Modern AI applications, like autonomous agents, real‑time assistants, and enterprise AI tools, require fast, low‑latency inferencing. Nvidia’s strategy now aims to build infrastructure that supports these workloads, not just training clusters.

What Is AI Inferencing and Why It Matters?

AI inference is the process of running a trained model to make predictions or generate outputs. It happens when users interact with AI apps, chatbots, or autonomous systems. Unlike training, which happens in controlled data centers and is done less frequently, inference happens constantly. It must be fast, reliable, and cost‑efficient. Enterprises now care more about speed and efficiency because real‑world AI use cases depend on instant responses.

Inferencing matters because it’s where the value of AI is unlocked for users. Models must run millions of queries per day in customer support, medical diagnosis, enterprise workflows, and more. Nvidia’s focus on this phase reflects where businesses are investing their budgets.

Rise of Agentic AI: Nvidia’s Big Bet on Autonomous Systems

What are AI Agents?

AI agents are systems that do more than respond to prompts. They can make decisions, plan steps, execute tasks, and adjust behavior as they operate. These systems are seen as the next wave of AI applications. At GTC 2026, Nvidia emphasized that agents are a major frontier, completing the move from passive tools to active, autonomous software assistants.

NemoClaw: Nvidia’s Enterprise Agent Stack

Nvidia unveiled NemoClaw, its customized version of the popular open‑source OpenClaw agent framework. NemoClaw includes security features like privacy routers and network guardrails to make agentic systems safer in business environments. It allows developers to run agents that can learn, self‑evolve, and act without constant human input.

NemoClaw is part of a broader Nvidia stack that also includes the AI‑Q open blueprint and Nemotron open models, all designed to help developers build and deploy agentic AI at scale. This signals Nvidia’s push beyond hardware into the software layer of autonomous AI infrastructure.

Hardware Reinvented for Inference and Agents

Vera Rubin Platform: The AI Supercomputer Stack

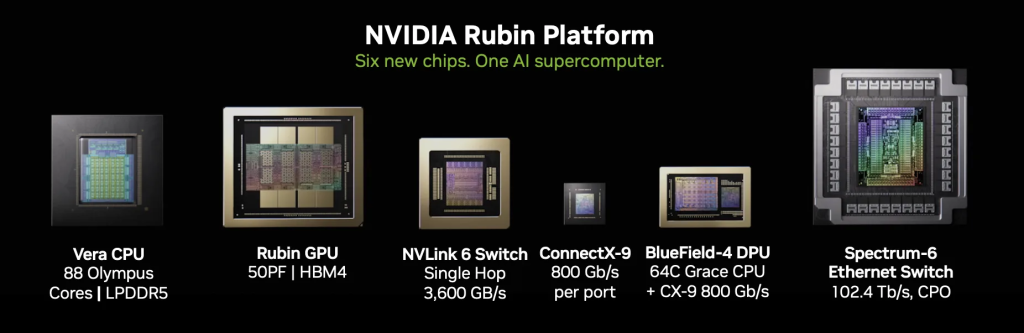

At GTC 2026, Nvidia highlighted the Vera Rubin platform, a full stack that combines CPUs, GPUs, networking, and accelerators tailored for inference and agent workloads. The Rubin GPU microarchitecture is set to launch in the second half of 2026, promising big improvements in inference performance and efficiency.

Rubin will work alongside Nvidia’s new Vera CPU, designed to accelerate agentic systems that require both parallel processing and general‑purpose compute. This hybrid approach helps reduce bottlenecks in real‑time AI operations.

Solving Bottlenecks: Storage and Latency

Nvidia also unveiled the BlueField‑4 STX storage architecture, designed to eliminate data bottlenecks common in large‑context inference tasks. This new system offers up to 5x token throughput and up to 4x energy efficiency, addressing a key challenge in agent workloads that must maintain massive context histories.

Strategic Partnerships Powering the Shift

Nvidia’s AI strategy extends beyond its own products. At GTC 2026, the company announced partnerships with major players like Salesforce, AWS, and NTT Data to accelerate enterprise AI adoption and real‑world deployment. These alliances aim to make Nvidia’s technology central to AI workflows across industries.

Nvidia also integrated technology from AI chip specialist Groq into its inference systems. The deal helps Nvidia boost its capability to serve real‑time inference workloads globally, including in markets like China.

The Economics of AI are Changing Fast

Industry analysts believe that Nvidia’s pivot toward inference and agent infrastructure could fuel massive growth. CEO Jensen Huang projected up to $1 trillion in demand for Nvidia’s AI chips by 2027, a figure that surpasses previous forecasts. This includes Blackwell and Vera Rubin‑based systems, driven by enterprise and hyperscaler demand.

This reflects a broader shift in AI economics: companies now earn more from running AI models than just building and training them. That means more spending on inference hardware, software stacks, and agent management tools. Analysts are watching this trend closely as Nvidia and others invest in infrastructure that supports this next phase. AI stock analysis and forecasting tools also highlight this shift as a key driver of future valuations.

Real‑World Use Cases: Where Agents + Inference are Winning

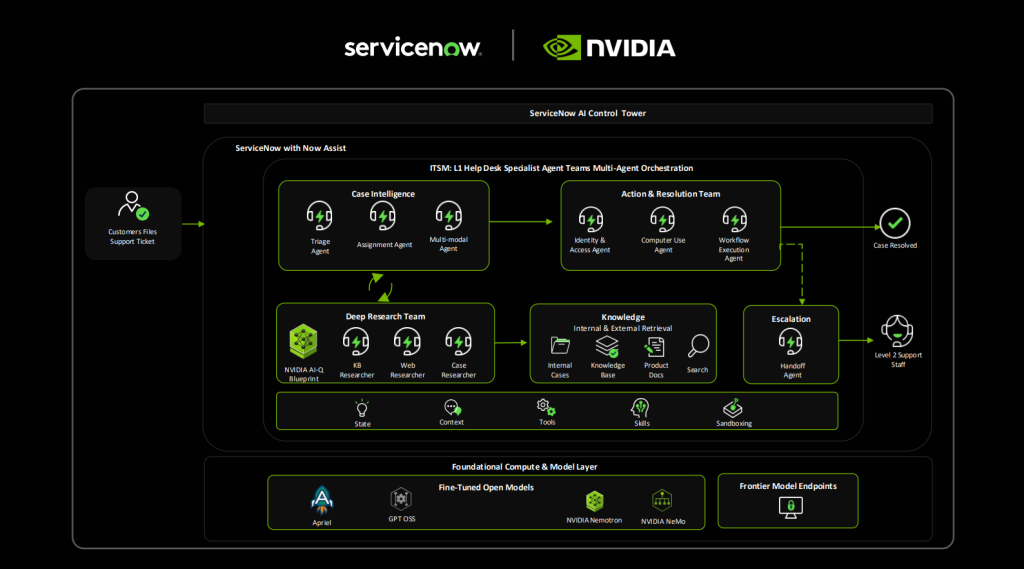

AI agents and advanced inference are already showing value in real settings. For example, autonomous software assistants can handle complex tasks like workflow automation, customer service orchestration, and real‑time data analysis without human supervision. Enterprise platforms like ServiceNow are beginning to integrate Nvidia’s agent stack into production systems.

In sectors like marketing and research, these agents automate tasks like content generation, data summarization, and competitive monitoring, workloads that require both reasoning and continuous inference.

Competitive Pressure: Can Nvidia Stay Ahead?

Nvidia is not alone in this race. Cloud giants like Google and Amazon are building their own inference chips and AI software ecosystems. Competition also comes from open‑source communities pushing alternative agent frameworks. Despite this, Nvidia’s deep ecosystem, spanning hardware, software, and partnerships, gives it a strong position.

However, success depends on execution, developer adoption, and security solutions that build enterprise trust, especially as agentic systems become more autonomous and integrated into core business functions.

Final Words

Nvidia is shifting its focus to inferencing and AI agents at the right time. AI is moving into real-world use. Speed and efficiency now matter most. With strong hardware, software, and partnerships, Nvidia is well-positioned. However, rising competition and high costs remain key risks. The next phase of AI growth will depend on execution, not just innovation.

Disclaimer:

The content shared by Meyka AI PTY LTD is solely for research and informational purposes. Meyka is not a financial advisory service, and the information provided should not be considered investment or trading advice.

What brings you to Meyka?

Pick what interests you most and we will get you started.

I'm here to read news

Find more articles like this one

I'm here to research stocks

Ask our AI about any stock

I'm here to track my Portfolio

Get daily updates and alerts (coming March 2026)