Google Launches TurboQuant AI Memory Compression Algorithm, Dubbed ‘Pied Piper’ Online

On March 25, 2026, Google introduced TurboQuant, a new AI memory compression algorithm that quickly made headlines across the tech world. Early reports indicate it can cut AI memory use by at least 6x with near-zero accuracy loss, and may speed up performance by up to 8x in some cases.

The innovation targets a major problem in modern AI: massive memory consumption that slows systems and raises costs. TurboQuant uses advanced quantization techniques to shrink data without losing quality.

Unsurprisingly, the internet quickly nicknamed it “Pied Piper,” after the fictional compression breakthrough in the TV show Silicon Valley. The question now is whether it could change the future of AI.

What Is Google TurboQuant?

TurboQuant is a new AI memory compression system introduced by Google in March 2026. It helps AI models use less memory while they run. This means systems can handle more data without needing extra hardware.

In simple terms, it “shrinks” the memory AI uses during tasks like answering questions or generating text. It does this without reducing quality or accuracy.

Official Definition & Purpose

Google describes TurboQuant as a quantization-based compression algorithm for large AI models. It focuses on the KV (key-value) cache, which stores the temporary attention data a model keeps while it processes a sequence. Its main goal is to reduce memory usage, improve speed, and lower costs. This helps scale AI systems more easily across industries.
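
To make the KV cache's footprint concrete, here is a minimal back-of-the-envelope sketch. The model shape (32 layers, 8 KV heads, head size 128) and the 32,768-token sequence are hypothetical values chosen only for illustration; they do not describe any specific Google model. The 6x factor is the reduction cited in early reports.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Estimate KV cache size: one key and one value vector per layer, head and token."""
    # The factor 2 accounts for storing both keys and values.
    values = 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size
    return values * bits_per_value / 8


# Hypothetical model shape, for illustration only.
baseline = kv_cache_bytes(num_layers=32, num_kv_heads=8, head_dim=128,
                          seq_len=32_768, bits_per_value=16)
compressed = baseline / 6  # the roughly 6x reduction cited in early reports

print(f"16-bit cache: {baseline / 2**30:.2f} GiB")
print(f"after 6x compression: {compressed / 2**30:.2f} GiB")
```

With these assumed numbers, the cache shrinks from 4 GiB to about 0.67 GiB for a single sequence.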

Why Is It Called ‘Pied Piper’ Online?

Why are people comparing TurboQuant to Pied Piper?

The nickname comes from the TV show Silicon Valley. In the show, a startup called Pied Piper creates a powerful compression algorithm.

Why does the comparison make sense?

TurboQuant shows similar results in real life. It offers high compression without losing accuracy. Tech users on social media quickly noticed the similarity. The term “Pied Piper moment” started trending on March 25-26, 2026. This mix of tech and pop culture helped the news go viral fast.

How TurboQuant Works – Core Technology Explained

What happens inside TurboQuant?

TurboQuant combines two main techniques to compress AI memory efficiently.

Step 1 – PolarQuant Compression

- Converts data into polar coordinates instead of standard formats

- Removes the need for extra normalization steps

- Stores information more efficiently in fewer bits
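
The idea behind this step can be illustrated with a toy two-dimensional sketch. It is not Google's implementation: the function names, the 4-bit angle code, and the choice to keep the radius at full precision are all assumptions made for illustration. The point it shows is that an angle always lies in a known range, so it can be quantized on a fixed grid without storing a per-block scale or offset.

```python
import math


def quantize_angle(theta, bits):
    """Map an angle in [-pi, pi] to one of 2**bits evenly spaced codes."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    # The modulo wraps +pi back onto the code for -pi (the same direction).
    return int(round((theta + math.pi) / step)) % levels


def dequantize_angle(code, bits):
    """Recover the grid angle that a code stands for."""
    step = 2 * math.pi / (2 ** bits)
    return code * step - math.pi


def compress_pair(x, y, bits=4):
    """Convert (x, y) to polar form and keep the radius plus a small angle code."""
    radius = math.hypot(x, y)
    theta = math.atan2(y, x)
    return radius, quantize_angle(theta, bits)


def decompress_pair(radius, code, bits=4):
    """Rebuild an approximate (x, y) from the radius and the angle code."""
    theta = dequantize_angle(code, bits)
    return radius * math.cos(theta), radius * math.sin(theta)


radius, code = compress_pair(3.0, 4.0, bits=4)
approx = decompress_pair(radius, code, bits=4)
print("angle code:", code, "reconstruction:", approx)
```

In this toy version the reconstructed point keeps its exact length, and its position error is bounded by the radius times pi / 2**bits, because only the angle is rounded.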

Step 2 – QJL Optimization Layer

- Uses the Quantized Johnson-Lindenstrauss (QJL) method

- Compresses leftover data into very small representations

- Keeps important patterns intact
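
A minimal sketch of the sign-bit Johnson-Lindenstrauss idea follows. It assumes the commonly described form of the technique: project a vector with a random Gaussian matrix, keep only the sign of each projected coordinate plus the vector's norm, and estimate inner products against an unquantized query. The dimensions, seed, and function names are hypothetical, and this is not Google's code.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def make_projection(m, dim, rng):
    """Random Gaussian projection matrix with m rows."""
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(m)]


def encode_key(key, projection):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(query, signs, key_norm, projection):
    """Estimate <query, key> from the sign bits and the unquantized query."""
    m = len(projection)
    total = sum(dot(row, query) * s for row, s in zip(projection, signs))
    # sqrt(pi / 2) corrects for the scale lost when keeping only signs.
    return math.sqrt(math.pi / 2) * key_norm * total / m


rng = random.Random(0)  # fixed seed so the sketch is repeatable
dim, m = 8, 2048        # hypothetical sizes for illustration
key = [rng.gauss(0.0, 1.0) for _ in range(dim)]
query = [rng.gauss(0.0, 1.0) for _ in range(dim)]
projection = make_projection(m, dim, rng)

signs, key_norm = encode_key(key, projection)
estimate = estimate_inner_product(query, signs, key_norm, projection)
print("exact:", dot(query, key), "estimate:", estimate)
```

Each stored coordinate costs a single bit, yet the estimate tracks the exact inner product, which is the quantity attention scores depend on. The error shrinks as the number of projection rows grows.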

Combined Impact

These methods work together to reduce memory use without harming results.

- Targets KV cache, which is a major memory load in AI models

- Maintains near-zero accuracy loss

- Improves processing speed during real-time tasks

This design makes TurboQuant highly efficient for modern AI workloads.

Key Benefits of TurboQuant – Data + Stats

What are the biggest advantages?

TurboQuant offers strong performance improvements based on early reports from March 2026:

- Reduces memory usage by at least 6x

- Improves inference speed by up to 8x in test environments

- Maintains accuracy levels close to original models

Why does this matter for businesses?

- Lower cloud computing costs

- Reduced need for expensive GPUs

- Better performance for large-scale AI apps

Other key benefits

- Supports longer context windows (important for chatbots and analysis tools)

- Improves energy efficiency

- Makes AI more accessible for startups and SMEs

This is especially important as companies scale AI systems globally.

Why Does This Matter for the AI Industry?

How does TurboQuant solve AI scaling issues?

AI models today require huge memory to process large inputs. The KV cache alone can take a major share of resources. TurboQuant reduces this burden. It allows models to run efficiently without adding hardware.
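
As a rough illustration of what "without adding hardware" could mean, the sketch below asks how many tokens of context fit in a fixed memory budget before and after compression. The 8 GiB budget and the per-token cache size (derived from a hypothetical 32-layer model with 8 KV heads of size 128 at 16 bits) are assumptions, not figures from Google.

```python
def max_context_tokens(budget_bytes, bytes_per_token, compression_ratio=1):
    """Tokens whose KV entries fit in the budget at a given compression ratio."""
    return (budget_bytes * compression_ratio) // bytes_per_token


# Hypothetical setup: keys + values, 32 layers, 8 KV heads, head size 128, 2 bytes each.
bytes_per_token = 2 * 32 * 8 * 128 * 2
budget = 8 * 2**30  # assumed 8 GiB reserved for the KV cache

before = max_context_tokens(budget, bytes_per_token)
after = max_context_tokens(budget, bytes_per_token, compression_ratio=6)
print("tokens before:", before)
print("tokens after 6x compression:", after)
```

Under these assumptions the same memory holds 65,536 tokens uncompressed and 393,216 tokens at 6x compression.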

What is the impact on AI costs and infrastructure?

- Companies can run AI models at lower cost

- Cloud providers may optimize pricing models

- Startups can deploy AI faster with fewer resources

What about the chip industry reaction?

After the announcement on March 25, 2026, some memory-related stocks showed short-term pressure. Analysts believe:

- Demand for high-end chips may shift

- Efficiency improvements could reduce dependency on large memory setups

Where does AI stock analysis fit in?

An AI stock analysis tool can help investors track how such innovations affect semiconductor and cloud companies in real time.

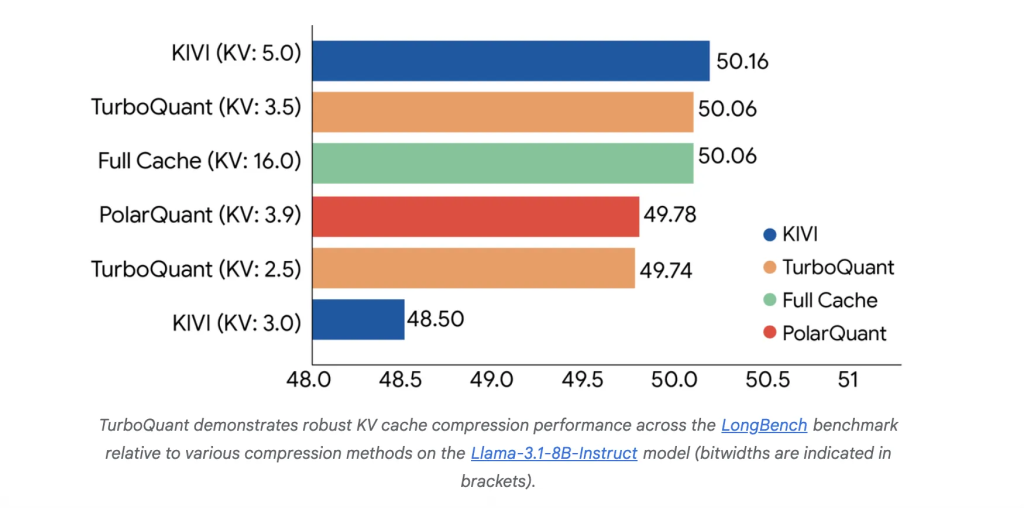

TurboQuant vs Existing AI Compression Methods

What are the limits of traditional methods?

Older compression techniques often:

- Add extra data overhead

- Reduce model accuracy at high compression

- Slow down performance in real-world use

What makes TurboQuant different?

TurboQuant improves on these limits by:

- Removing unnecessary overhead through smarter encoding

- Using QJL to maintain accuracy

- Delivering strong compression without performance loss

This balance of efficiency and accuracy is rare. It gives TurboQuant a clear edge over older methods.

Limitations and Current Status

Is TurboQuant ready for full deployment?

Not yet. As of March 2026, it is still in the research stage.

What are the current limitations?

- Focuses mainly on inference memory, not training

- Real-world performance may differ from lab results

- Requires integration into major AI platforms

Adoption will depend on how quickly developers and cloud providers implement it. Still, early results show strong potential for practical use.

What’s Next? Future of AI Compression

What can we expect next?

TurboQuant is expected to be discussed at ICLR 2026, a major AI research conference.

Will Google integrate it into products?

Likely yes. It may appear in future versions of Google’s AI systems, including Gemini.

What trends could follow?

- Faster growth in edge AI and on-device AI

- More focus on efficient AI models

- New competition in AI optimization technologies

This could start a new wave of innovation in AI efficiency.

Wrap Up

Google’s TurboQuant is a major step toward efficient AI systems. It reduces memory use while keeping performance strong. This helps solve key scaling and cost challenges in AI. Although still early, its impact could be wide. If adopted at scale, TurboQuant may change how AI models are built, deployed, and optimized in the coming years.

Frequently Asked Questions (FAQs)

TurboQuant AI, launched by Google on March 25, 2026, compresses AI memory, using less space without losing performance.

TurboQuant AI maintains accuracy while reducing memory. Tests reported in March 2026 show AI models keeping their accuracy while running faster.

As of March 2026, TurboQuant is in the research stage. Public or commercial use will depend on platform adoption and testing.

Disclaimer:

The content shared by Meyka AI PTY LTD is solely for research and informational purposes. Meyka is not a financial advisory service, and the information provided should not be considered investment or trading advice.
